AWS Lake Formation 2023 Year in Review

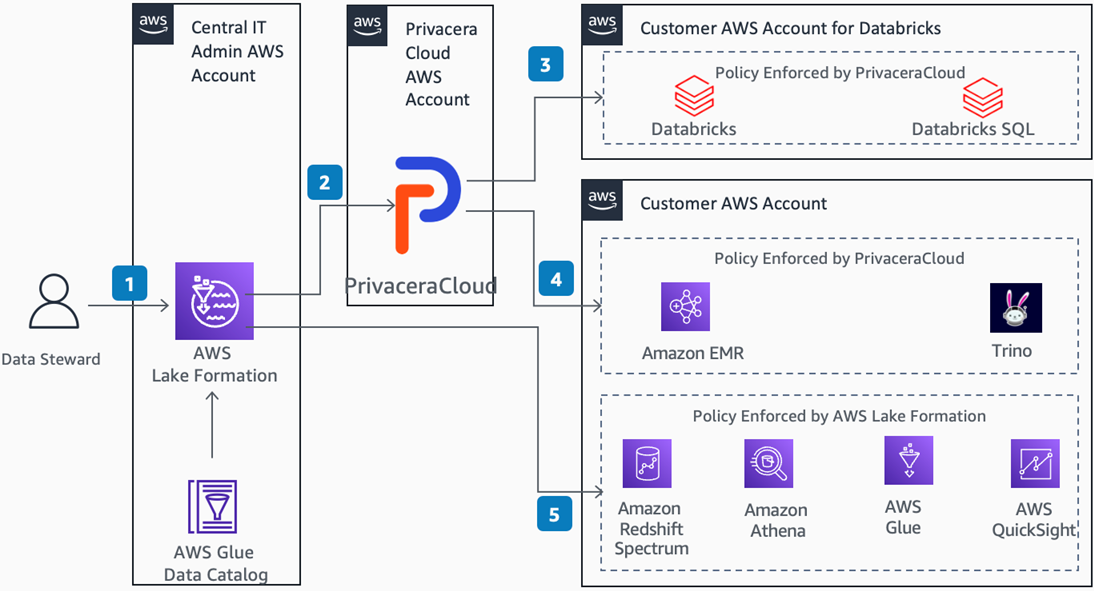

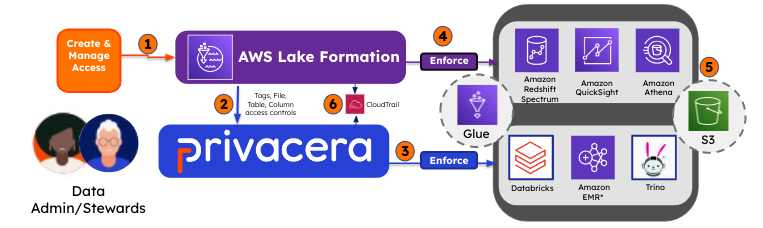

Discover the latest advancements in AWS Lake Formation and the AWS Glue Data Catalog! This post covers the new features introduced in 2023 for governing data lakes built on Amazon S3. Read on to see how they can simplify and strengthen your data management.

Embark on a data-driven journey with AWS Lake Formation and the AWS Glue Data Catalog! Read about what we released in 2023 to help you innovate and simplify your data governance with Lake Formation. This summary recaps a year of significant enhancements. Fasten your seatbelts for a tour of where seamless data management is headed!