The pattern #

Andrej Karpathy posted a gist about building personal knowledge bases with LLMs. 41,000 people bookmarked it. Most of them might never build one.

The idea is straightforward: instead of RAG (upload files, retrieve chunks, generate answers from scratch every time), you have the LLM build and maintain a persistent wiki. New sources get integrated into existing pages. Cross-references happen automatically. Contradictions get flagged. The knowledge compounds instead of being rediscovered on every query.

There are three layers (the immutable raw sources, the LLM-maintained wiki, and a schema file that tells the LLM how to behave), three operations (ingest to process a source into the wiki, query to ask questions against it, and lint to health-check the whole thing), and a single Obsidian frontend. You browse the wiki with graph view, wikilinks, and search while the LLM does the filing and cross-referencing.

The core insight is about maintenance. Humans abandon wikis because the bookkeeping grows faster than the value. LLMs don’t get bored, don’t forget to update a cross-reference, and can touch 15 files in one pass. The wiki stays maintained because the cost of maintenance is near zero.

If you haven’t read Karpathy’s original post, go do that first. Everything below assumes you know the pattern.

Two implementations #

Within weeks of Karpathy’s post, two detailed implementations appeared. They take very different approaches to the same idea.

The Google Doc: transparent and manual #

raycfu’s Google Doc is a step-by-step tutorial. Create a vault. Make these folders. Paste this schema. Run this command. It includes Karpathy’s original prompt verbatim and walks you through the entire setup process.

The approach is deliberately transparent. Every operation is a copy-paste Claude Code command with --allowedTools Bash,Write,Read flags. You see exactly what the LLM is being told to do. There’s no abstraction layer, so you’re running raw prompts and watching the results.

The doc covers the full lifecycle: vault creation, schema setup, source ingestion, querying, health checks, and even optional automations like morning briefings and call transcript processing. It’s honest about being abstract. The author explicitly says this is the idea, not a specific implementation, and that your LLM should help you figure out the details.

claude-obsidian: packaged and opinionated #

AgriciDaniel’s claude-obsidian is a plugin with 10 specialized skills, a hot cache system, and multi-agent support. Where the Google Doc gives you commands to copy-paste, claude-obsidian gives you skills that handle the complexity behind the scenes.

The skill list covers the full surface area: wiki for setup, wiki-ingest for source processing, wiki-query for questions, wiki-lint for health checks, save for filing conversations, autoresearch for autonomous web research loops, canvas for visual boards, defuddle for stripping web page clutter, and obsidian-markdown and obsidian-bases for syntax reference.

The hot cache (hot.md) is a genuinely clever addition. It stores ~500 words of recent session context so the next conversation doesn’t start from zero. At ~500 tokens to read, it eliminates the 2,000-3,000 tokens you’d otherwise spend re-establishing context. That’s a good trade.

Where they agree #

Despite the different approaches, both implementations land on the same architecture:

- Three layers: raw sources → wiki → schema. Immutable sources, LLM-maintained wiki, a configuration file that defines behavior.

- Three operations: ingest, query, lint. Process sources, ask questions, health-check.

- Knowledge compounds: the 50th source is dramatically more useful than the first because it gets cross-referenced against everything already in the wiki.

- Obsidian as the frontend: graph view, wikilinks, full-text search. The LLM writes; you browse.

- The LLM does the maintenance: humans curate sources and ask questions. The LLM does the filing, cross-referencing, and bookkeeping.

This convergence is telling. Two independent implementations arriving at the same structure suggests the pattern is sound.

Where they differ #

Transparency vs. abstraction #

The Google Doc shows you every command. You understand exactly what’s happening because you’re the one running it. This is great for learning the pattern and terrible for daily use because nobody wants to paste multi-line prompts with --allowedTools flags every time they clip an article.

claude-obsidian abstracts the operations into skills. You say “ingest this” and the skill handles the rest. This is great for daily use and harder to debug when something goes wrong. You’re trusting the skill to do the right thing.

Honesty vs. marketing #

The Google Doc is refreshingly honest. It says “this document is intentionally abstract” and “the right way to use this is to share it with your LLM agent and work together to instantiate a version that fits your needs.” It knows what it is: a pattern description, not a product.

The claude-obsidian blog post reads more like a marketing piece. Market size statistics, McKinsey citations, radar charts comparing itself to competitors, a “358 GitHub stars” callout, and phrases like “the most complete” implementation. The engineering underneath is solid, but you have to read past the positioning to find it.

What claude-obsidian adds that’s genuinely useful #

Credit where it’s due: claude-obsidian introduces several ideas that the Google Doc doesn’t cover:

- Delta tracking: a manifest file that hashes sources so you don’t re-process unchanged files. Simple and effective.

- Contradiction callouts: [!contradiction] callouts on wiki pages when new sources conflict with existing claims. The LLM flags both sides with sources instead of silently overwriting.

- Token discipline tiers: quick/standard/deep query modes that read progressively more of the wiki. Quick mode reads only the hot cache and index (~1,500 tokens). Standard reads 3-5 pages (~3,000 tokens). Deep reads everything. This matters when you’re paying per token.

- Lint categories: eight specific checks (orphan pages, dead links, stale claims, missing pages, missing cross-references, frontmatter gaps, empty sections, stale index entries) instead of a generic “health check.”

- Dual ingest paths: distinguishing between external sources (copy to .raw/) and user notes (read in place, don’t move). This is important because your meeting notes in Projects/ shouldn’t get copied somewhere else.
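The delta-tracking idea in the first bullet can be sketched in a few lines of Python. This is a minimal illustration, not claude-obsidian's actual code: the manifest layout (a JSON object keyed by relative path, each entry holding a `sha256` field) and the function names are assumptions made for the example.

```python
import hashlib
import json
from pathlib import Path


def file_hash(path: Path) -> str:
    """Return the SHA-256 hex digest of a file's bytes."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def load_manifest(manifest_path: Path) -> dict:
    """Read the manifest, or return an empty one if it does not exist yet."""
    if manifest_path.exists():
        return json.loads(manifest_path.read_text(encoding="utf-8"))
    return {}


def changed_sources(raw_dir: Path, manifest_path: Path) -> list:
    """List files under raw_dir that are new or whose hash differs from the manifest."""
    manifest = load_manifest(manifest_path)
    changed = []
    for path in sorted(raw_dir.rglob("*")):
        if not path.is_file() or path == manifest_path:
            continue  # skip directories and the manifest itself
        key = path.relative_to(raw_dir).as_posix()
        if manifest.get(key, {}).get("sha256") != file_hash(path):
            changed.append(path)
    return changed


def record_ingest(raw_dir: Path, manifest_path: Path, path: Path) -> None:
    """Store the current hash for path so the next run skips it."""
    manifest = load_manifest(manifest_path)
    key = path.relative_to(raw_dir).as_posix()
    manifest[key] = {"sha256": file_hash(path)}  # hypothetical entry layout
    manifest_path.write_text(json.dumps(manifest, indent=2), encoding="utf-8")
```

An ingest run would call `changed_sources` first, process only what it returns, and call `record_ingest` after each file succeeds. A real manifest would likely carry more fields, such as ingest dates and the wiki pages created from each source.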

What I didn’t need in claude-obsidian #

Not everything in the plugin earns its complexity:

- DragonScale address system: a semantic coordinate system for organizing wiki pages. It sounds impressive, but for me it added a layer of abstraction without solving any problem that Obsidian’s native folder structure and wikilinks don’t already handle.

- Community footer and marketing hooks: “Join 2,800+ AI Marketing Builders” and links to Skool communities. Fine for the author’s business, but it leaks into the plugin’s identity in a way that feels off for a knowledge management tool.

- Canvas complexity: the canvas skill is interesting but adds significant surface area for a feature most users won’t need in their first 50 sessions.

What I wanted that neither had #

After spending time with both implementations, I had a clear picture of what was missing.

Lifecycle management #

Neither implementation answers the question: what happens when a project ends?

You’re working on a customer engagement for three months. You ingest meeting notes, architecture docs, decision records. The wiki builds up a rich picture of that customer’s environment. Then the project wraps up. Now what?

The wiki pages are still useful: the knowledge about that customer’s architecture doesn’t expire. But the project notes, the meeting transcripts, and the working documents should move out of your active workspace. Neither implementation has a concept of “done.”

PARA solves this. It’s an organizational system by Tiago Forte that sorts everything into four buckets:

- Projects: active work with a deadline and a clear outcome. A customer engagement, a blog series, a certification study plan. These end.

- Areas: ongoing responsibilities with no end date. Your role as an SA, your health, your team’s operational standards. These continue.

- Resources: reference material on topics you’re interested in. This is where the wiki lives because it’s knowledge that’s useful regardless of which project it came from.

- Archive: completed or inactive items from the other three categories. Done projects, paused areas, outdated resources.

The power of PARA for a knowledge base is that it makes lifecycle explicit. When a project wraps up, you move the folder to Archive. The wiki knowledge in Resources stays put because it was never tied to the project folder in the first place, just linked to it. You don’t lose knowledge when work ends. You don’t clutter your active workspace with finished projects. And you always know where to find things based on how actionable they are right now.

Dual ingest that respects ownership #

The Google Doc treats everything as a raw source that gets dumped into a folder. claude-obsidian distinguishes between source types but doesn’t fully separate the paths.

I wanted two clear paths: external sources (articles, PDFs, transcripts from outside) go to .raw/ as immutable archives. My own notes (meeting notes, project docs, things I wrote) stay where they are. The wiki reads them in place and creates knowledge pages that link back. If I later move the project to Archive, Obsidian updates the wikilinks automatically.

This matters because my notes are living documents. I edit them. I add to them after meetings. Copying them to .raw/ would create a stale snapshot.

Tool-agnostic skills #

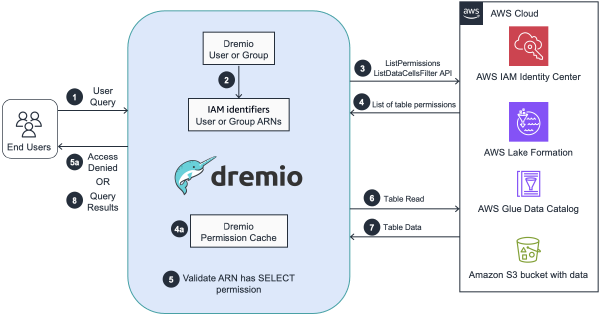

The Google Doc is built around Claude Code commands. claude-obsidian ships with a multi-agent setup script that supports Claude Code, Gemini CLI, Codex CLI, and Cursor, but the skills themselves are deeply tied to Claude Code’s plugin architecture.

I use Kiro. I wanted skills that work as markdown files loaded on demand, not as plugins tied to a specific tool’s ecosystem. If I switch tools next year, the skills should come with me.

No unnecessary complexity #

I didn’t need DragonScale coordinates, semantic tiling, or canvas workflows. I needed ingest, query, lint, save, and autoresearch (the core operations) done well for me.

What I built #

I took the best ideas from both implementations and ported them into Kiro-compatible skills. The result is a set of seven skills that implement the LLM Wiki pattern with PARA methodology on top.

PARA at the top level #

The vault structure uses PARA for lifecycle management and the wiki pattern for the knowledge graph inside Resources. The two systems complement each other: PARA answers “how actionable is this right now?” while the wiki answers “how does this connect to everything else I know?”

    vault/
    ├── Inbox/                  # unprocessed material
    ├── Projects/               # active work with deadlines
    ├── Areas/                  # ongoing responsibilities
    ├── Resources/
    │   └── wiki/               # AI-maintained knowledge graph
    │       ├── index.md        # master catalog
    │       ├── log.md          # chronological record
    │       ├── hot.md          # session context cache
    │       ├── sources/        # one page per ingested source
    │       ├── entities/       # people, orgs, products
    │       ├── concepts/       # ideas, patterns, frameworks
    │       ├── domains/        # top-level topic areas
    │       ├── comparisons/    # side-by-side analyses
    │       └── questions/      # filed answers
    ├── Archive/                # completed projects
    └── .raw/                   # immutable external sources

A concrete example: I’m working on a customer engagement in Projects/Customer-X/. I ingest my meeting notes and the wiki creates entity pages for the people I met, concept pages for the architecture patterns we discussed, and source summaries that link back to my notes. Three months later, the engagement wraps up. I move Projects/Customer-X/ to Archive/Customer-X/. The wiki pages in Resources/wiki/ stay exactly where they are so I can easily find the entity page for that customer, the concept page for their architecture pattern, the connections to other customers using similar approaches. Obsidian updates the wikilinks automatically. Knowledge persists; project context is archived.

Dual ingest with clear ownership #

External sources (PDFs, articles, URLs) get copied to .raw/ with a manifest that tracks hashes, ingest dates, and which wiki pages were created. This is straight from claude-obsidian’s delta tracking. It works well and I kept it.

User notes in Projects/, Areas/, or Inbox/ get read in place. The wiki creates knowledge pages that link back to the original note’s location. The original is never copied or moved.

The detection is simple: if the path is outside the vault or is a URL, it’s external. If it’s inside Projects/, Areas/, or Inbox/, it’s a user note. If it’s ambiguous, the skill asks.
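The detection rule above is small enough to sketch directly. This is a hedged illustration rather than the skill itself (the skill is a markdown file, not Python); the function name and the three return labels are assumptions made for the example.

```python
from pathlib import Path
from urllib.parse import urlparse

# Folders whose contents are treated as user-owned notes (from the vault layout).
USER_NOTE_DIRS = ("Projects", "Areas", "Inbox")


def classify_source(source: str, vault: Path) -> str:
    """Return 'external', 'user_note', or 'ask' for a path or URL."""
    if urlparse(source).scheme in ("http", "https"):
        return "external"  # URLs are always external
    path = Path(source).expanduser().resolve()
    try:
        relative = path.relative_to(vault.resolve())
    except ValueError:
        return "external"  # the path lies outside the vault
    if relative.parts and relative.parts[0] in USER_NOTE_DIRS:
        return "user_note"  # read in place, never copied
    return "ask"  # inside the vault but ambiguous, so defer to the user
```

With a vault at `/home/me/vault` (a made-up path), `classify_source("/home/me/vault/Projects/Customer-X/notes.md", vault)` yields `"user_note"`, while a PDF in a downloads folder yields `"external"` and would be copied to .raw/.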

What I kept from claude-obsidian #

- Hot cache: hot.md with ~500 words of recent context, updated after every operation. The token economics are too good to skip.

- Delta tracking: .raw/.manifest.json with source hashes. No re-processing unchanged files.

- Contradiction callouts: [!contradiction] on both the existing page and the new source. Flag, don’t overwrite.

- Token discipline: quick/standard/deep query modes. Most questions don’t need the full wiki.

- Lint categories: eight specific checks with severity levels and suggested fixes.

- Defuddle integration: strip web page clutter before ingesting URLs. Saves 40-60% tokens on typical articles.
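Two of the eight lint checks, dead links and orphan pages, are mechanical enough to sketch without an LLM. The following is a simplified illustration under stated assumptions: pages are matched by file name only, embeds such as `![[image.png]]` are not handled specially, and the `EXEMPT` set and function name are hypothetical.

```python
import re
from pathlib import Path

# Captures the target of [[Target]], [[Target|alias]], and [[Target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")

# Assumed: structural pages that nothing needs to link to.
EXEMPT = {"index", "log", "hot"}


def lint_links(wiki_dir: Path) -> dict:
    """Report wikilinks that point nowhere and pages that nothing links to."""
    pages = {p.stem: p for p in wiki_dir.rglob("*.md")}
    linked = set()
    dead = []
    for name, path in sorted(pages.items()):
        text = path.read_text(encoding="utf-8")
        for target in WIKILINK.findall(text):
            target = target.strip().split("/")[-1]  # drop any folder prefix
            if target == name:
                continue  # a self-link does not rescue an orphan
            if target in pages:
                linked.add(target)
            else:
                dead.append(f"{name} -> {target}")
    orphans = sorted(set(pages) - linked - EXEMPT)
    return {"dead_links": dead, "orphans": orphans}
```

The remaining checks (stale claims, missing cross-references, and so on) need judgment about content, which is where the LLM pass earns its keep.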

What I stripped #

- DragonScale: gone. Obsidian’s folder structure and wikilinks handle organization fine.

- Canvas as a core skill: still available but not part of the core workflow.

- Community/marketing hooks: obviously.

- Tool-specific plugin architecture: everything is a markdown SKILL.md file. Kiro loads them on demand, so a skill costs no tokens when it isn’t in use.

What I added #

- PARA lifecycle: the missing piece. Projects end. Knowledge persists.

- Vault scaffolding: I expanded the “scaffold” command to create the full PARA + wiki structure, domain pages, templates, CSS snippets for graph view colors, and an AGENTS.md schema file.

- Cross-project referencing: any project can read the wiki by pointing to the vault path. Hot cache first (~500 tokens), then index (~1,000 tokens), then drill into specific pages. Token-efficient by default.

- Autoresearch with constraints: max 3 rounds, max 15 pages per session, prefer primary sources. The research agent knows when to stop.
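The read order used for cross-project referencing (hot cache, then index, then specific pages) can be expressed as a small planning function. This is a sketch under assumptions: the function name is hypothetical, the caller is assumed to supply the candidate pages, and the five-page cap mirrors the "3-5 pages" standard tier described earlier.

```python
from pathlib import Path
from typing import Sequence

MAX_STANDARD_PAGES = 5  # assumed cap, taken from the "3-5 pages" standard tier


def read_plan(wiki_dir: Path, mode: str, candidates: Sequence[str] = ()) -> list:
    """Return the files to read, cheapest first, for a given query mode."""
    plan = [wiki_dir / "hot.md", wiki_dir / "index.md"]
    if mode == "quick":
        return plan  # hot cache and index only
    if mode == "standard":
        picked = [wiki_dir / name for name in candidates[:MAX_STANDARD_PAGES]]
        return plan + picked
    if mode == "deep":
        rest = sorted(p for p in wiki_dir.rglob("*.md") if p not in plan)
        return plan + rest  # everything else in the wiki
    raise ValueError(f"unknown mode: {mode!r}")
```

Ordering the plan from cheapest to most expensive means a caller can stop reading as soon as the question is answered, which is the point of the tiering.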

The takeaway #

If you’re starting from zero, read the Google Doc first. It communicates the pattern clearly without any abstraction getting in the way. You’ll understand what the LLM is doing and why.

If you want to see what a full implementation looks like, read through claude-obsidian’s skills. Focus on the engineering: delta tracking, contradiction detection, token discipline tiers, and the lint categories. These are cleverly built and all worth studying.

Neither handles the lifecycle problem. What happens when a project ends? What happens when knowledge from three different projects needs to coexist without cluttering your active workspace? PARA solves that, and it’s the piece I couldn’t find in either implementation.

In a future post, I’ll walk through the full implementation: how the skills work, how PARA and the wiki interact in practice, and what a vault looks like after 30+ sources.